Today at re:Invent, Amazon is announcing AWS Fargate, a container service that allows you to provision containers in AWS without having to worry about the VM instances for them to run on. We had an early preview, and the opportunity to see how Aqua’s Container Security Platform works to protect containers running in it.

In this post we’ll look at how Aqua can also protect against unexpected behavior at run-time – thus potentially preventing zero-day exploits too.

Containers as a Compute Primitive

You start by creating a cluster, but note that although this is an ECS (Elastic Container Service) cluster, you don’t get any visibility into the virtual machines that the containers run on.

$ aws fargate create-cluster

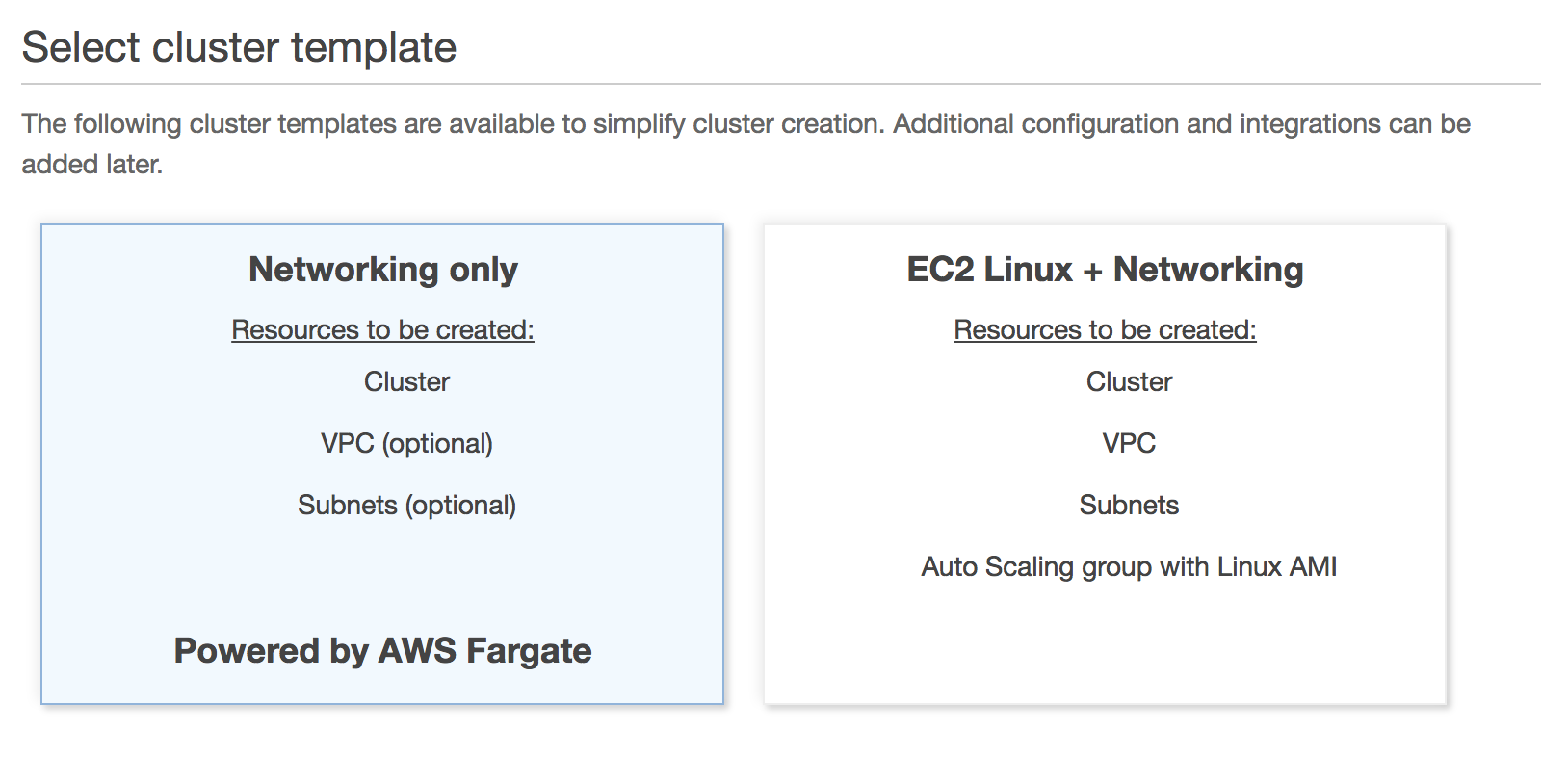

Alternatively, in the ECS Console you can use a new Cluster template for Fargate that can also create a VPC and subnets for the containers to run in. (Read on for more about the networking set-up for Fargate containers.)

Task Definitions, Tasks and Services

Once your cluster is created, you can start registering Task Definitions. Just as for traditional ECS deployments, each task definition specifies the container image, port mappings, and attributes such as the memory and CPU requirements. Here’s an example of the JSON file used to register a very simple web server task. (For more on ECS core concepts, check out this video.)

    {
      "cpu": ".25 vCpu",
      "memory": ".5 GB",
      "networkMode": "awsvpc",
      "requiresCompatibilities": [
        "FARGATE"
      ],
      "family": "hello",
      "containerDefinitions": [
        {
          "name": "hello",
          "image": "lizrice/hello:1",
          "portMappings": [
            {
              "hostPort": 8080,
              "containerPort": 8080,
              "protocol": "tcp"
            }
          ],
          "environment": [
            {
              "name": "WEB_SERVER_PORT",
              "value": ":8080"
            }
          ]
        }
      ]
    }

The containerDefinitions section of the task definition JSON file is actually a list, so that (rather like a Kubernetes pod) the task definition can contain multiple containers.

From a security perspective you’ll want to use vulnerability scanning to make sure that the images you include in the task definitions don’t contain known exploitable issues.

I mentioned port mappings; in Fargate you’ll be using awsvpc network mode, which automatically creates an elastic network interface for each task, with its own private IP address. In this mode you’re required to specify the same port for host and container, so it’s less of a mapping, more of an expose instruction.
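To make the port rule concrete, here is a minimal sketch of a pre-flight check you could run on a task definition before registering it. The function name `awsvpc_port_problems` is hypothetical, and treating a missing hostPort as equal to containerPort is an assumption on my part rather than something shown in the preview.

```python
def awsvpc_port_problems(task_definition):
    """Return a list of port mapping problems for an awsvpc task definition.

    Assumption: a missing hostPort is treated as equal to containerPort.
    """
    problems = []
    if task_definition.get("networkMode") != "awsvpc":
        return problems  # the same-port rule only applies in awsvpc mode
    for container in task_definition.get("containerDefinitions", []):
        for mapping in container.get("portMappings", []):
            container_port = mapping.get("containerPort")
            host_port = mapping.get("hostPort", container_port)
            if host_port != container_port:
                problems.append(
                    f"{container.get('name')}: hostPort {host_port} "
                    f"!= containerPort {container_port}"
                )
    return problems


# A trimmed-down version of the "hello" task definition from this post.
hello = {
    "networkMode": "awsvpc",
    "containerDefinitions": [
        {
            "name": "hello",
            "portMappings": [
                {"hostPort": 8080, "containerPort": 8080, "protocol": "tcp"}
            ],
        }
    ],
}

print(awsvpc_port_problems(hello))  # prints []
```

An empty list means every mapping uses the same port on both sides, which is what awsvpc mode expects.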

Once you’ve created a task definition, you can either

- run a task, which instantiates a container based on the definition

- create a service, which starts and maintains a desired number of active containers based on the definition. You can easily scale this up and down by updating the service with a modified desired parameter.

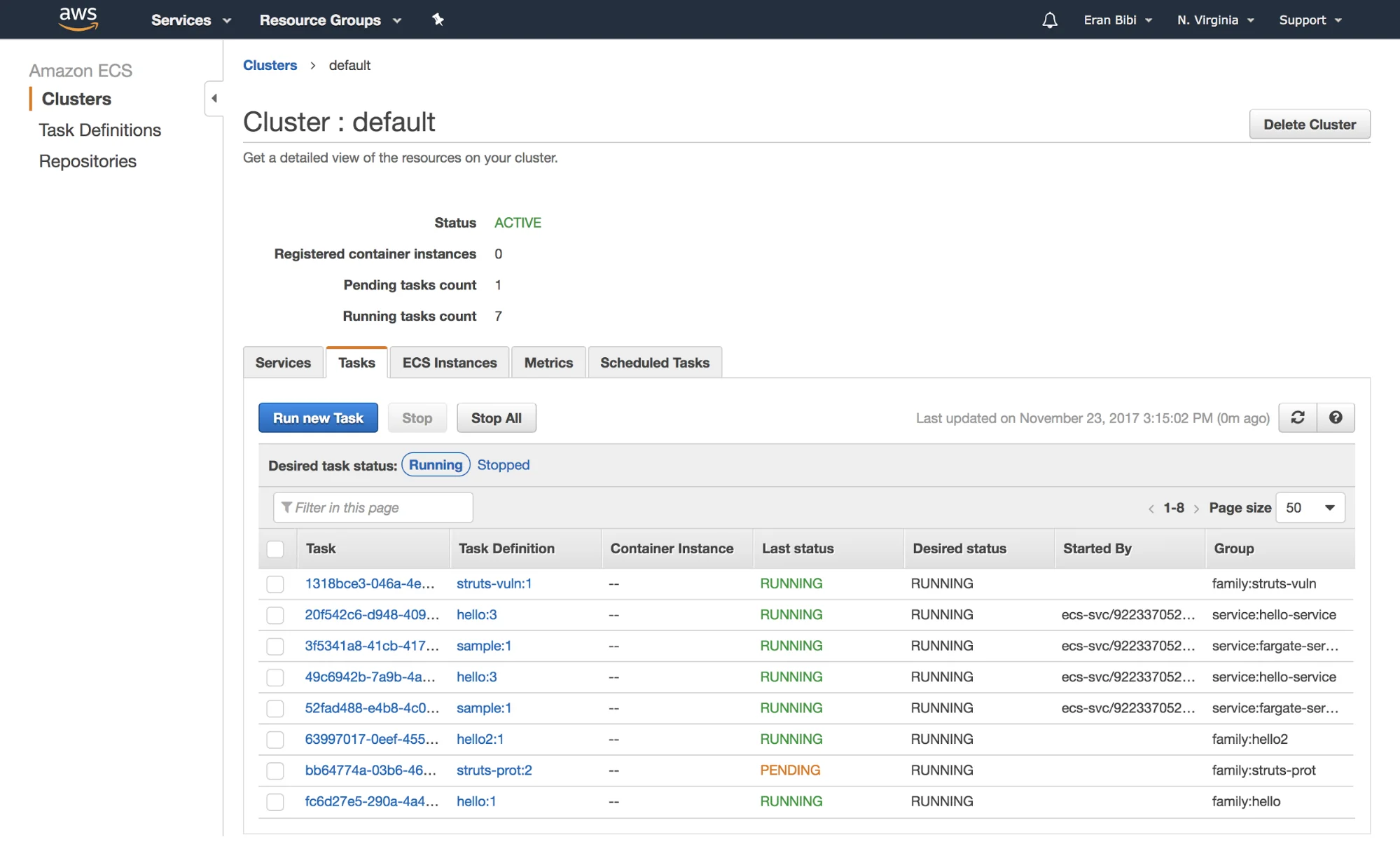

Tasks and services show up in the ECS console.

Network Configuration

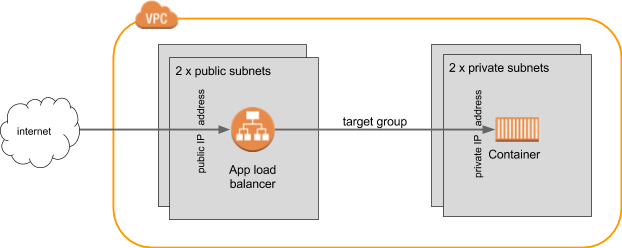

For my container tasks, I’m using a setup that looks something like this:

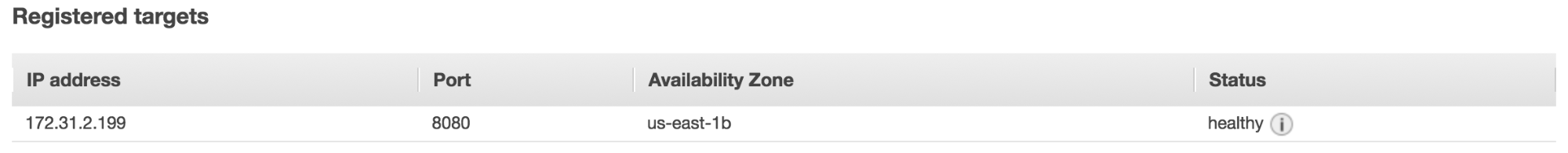

When you run the task or create the service, you define (as part of the awsvpc configuration) that it is attached to the two private subnets. The load balancer itself is internet facing, and associated with two public subnets. It has a target group which targets the (private) IP address of the container.

With this configuration you can check that a container is up and running by looking at the health checks in the target group that points to it. The health check is a configurable endpoint that you know the container will respond to, which the service polls on a regular basis.
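If you haven’t worked with target group health checks before, the idea can be sketched in a few lines of Python. This is only an illustration of threshold-based health tracking, not AWS’s implementation: the function name `track_health` and the threshold values are assumptions, and the probe results are passed in as booleans rather than fetched over HTTP.

```python
def track_health(probe_results, healthy_threshold=3, unhealthy_threshold=2):
    """Yield the target's state after each probe result.

    probe_results is an iterable of booleans (True = the endpoint answered OK).
    The target becomes "healthy" after healthy_threshold consecutive successes
    and "unhealthy" after unhealthy_threshold consecutive failures.
    """
    state = "initial"
    streak_ok = 0
    streak_fail = 0
    for ok in probe_results:
        if ok:
            streak_ok += 1
            streak_fail = 0
            if streak_ok >= healthy_threshold:
                state = "healthy"
        else:
            streak_fail += 1
            streak_ok = 0
            if streak_fail >= unhealthy_threshold:
                state = "unhealthy"
        yield state


states = list(track_health([True, True, True, False, False]))
print(states)
# prints ['initial', 'initial', 'healthy', 'healthy', 'unhealthy']
```

A single failed probe doesn’t flip the state, which is why a container that hiccups once stays in service.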

As an alternative network configuration, it’s possible to specify that you want a public IP address associated directly with the task or service. This saves having to have the public and private subnets, but it’s a bit of a rigmarole to find the IP address as it’s not included in the task or service description. You get a network interface association, which you then have to query to get the IP address.

Running an Exploitable Image

I’m going to deploy a container image that demonstrates a Struts vulnerability that may have been the loophole allowing attackers to obtain 143 million people’s details from Equifax. We’ll check that we can exploit this version of the code when running as a task in AWS, and then we’ll see how, with Aqua runtime protection on the same code, this exploit wouldn’t work. First let’s run a task based on the struts-vuln task definition.

    $ aws fargate run-task --cluster default --launch-type FARGATE \
        --task-definition struts-vuln --network-configuration \
        "awsvpcConfiguration={subnets=[subnet-4785160c,subnet-ffa962a2]}"

Matching the diagram above, the subnets specified here are the private ones from my VPC. The output (which is quite long) includes the task ID, which we’ll need in order to find the private IP address that gets assigned to this task:

$ aws fargate describe-tasks --tasks <task id>
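Because that output is long, it can help to pull out just the network interface details with a few lines of Python. This is a sketch under assumptions: it expects the JSON shape of the ECS DescribeTasks response as I understand it today (an attachments list whose details are name/value pairs), which may differ from what the preview CLI printed. The ARN, interface ID and IP address in the sample are made-up placeholders.

```python
import json


def eni_details(describe_tasks_output):
    """Map each task ARN to the name/value details of its network interface."""
    result = {}
    for task in describe_tasks_output.get("tasks", []):
        for attachment in task.get("attachments", []):
            if attachment.get("type") != "ElasticNetworkInterface":
                continue
            result[task.get("taskArn")] = {
                detail["name"]: detail["value"]
                for detail in attachment.get("details", [])
            }
    return result


# Placeholder sample in the assumed shape; none of these values are real.
sample = json.loads("""
{
  "tasks": [
    {
      "taskArn": "arn:aws:ecs:us-east-1:123456789012:task/example",
      "attachments": [
        {
          "type": "ElasticNetworkInterface",
          "details": [
            {"name": "networkInterfaceId", "value": "eni-00000000"},
            {"name": "privateIPv4Address", "value": "10.0.1.23"}
          ]
        }
      ]
    }
  ]
}
""")

for arn, details in eni_details(sample).items():
    print(arn, details["privateIPv4Address"])
```

The same networkInterfaceId value is what you would look up if you had asked for a public IP address instead.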

I also created a load balancer for this task, and a target group for the load balancer which points traffic to the private IP address for the task.

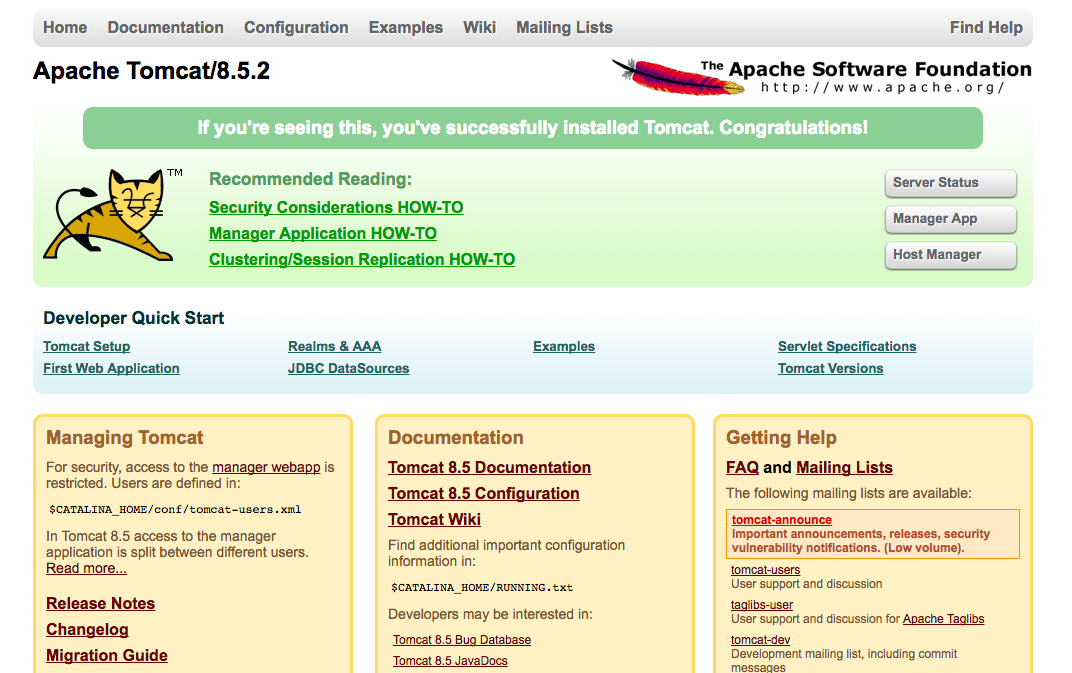

Browsing to the name associated with the load balancer we see a Tomcat test page, so we know it’s up and running.

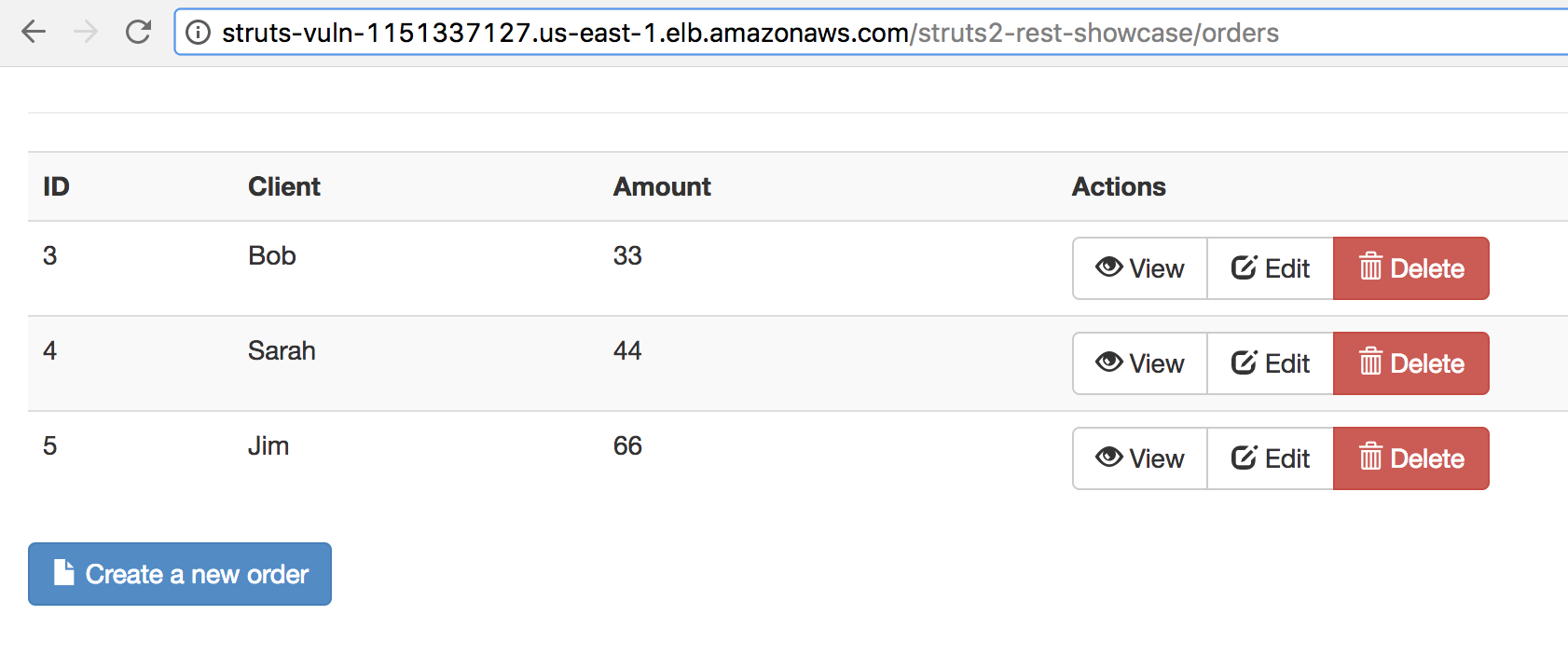

The vulnerability we’re going to exploit, known as CVE-2017-9805, is a flaw in the REST API framework plugin for Struts, so the test image includes some simple CRUD handling for “orders”.

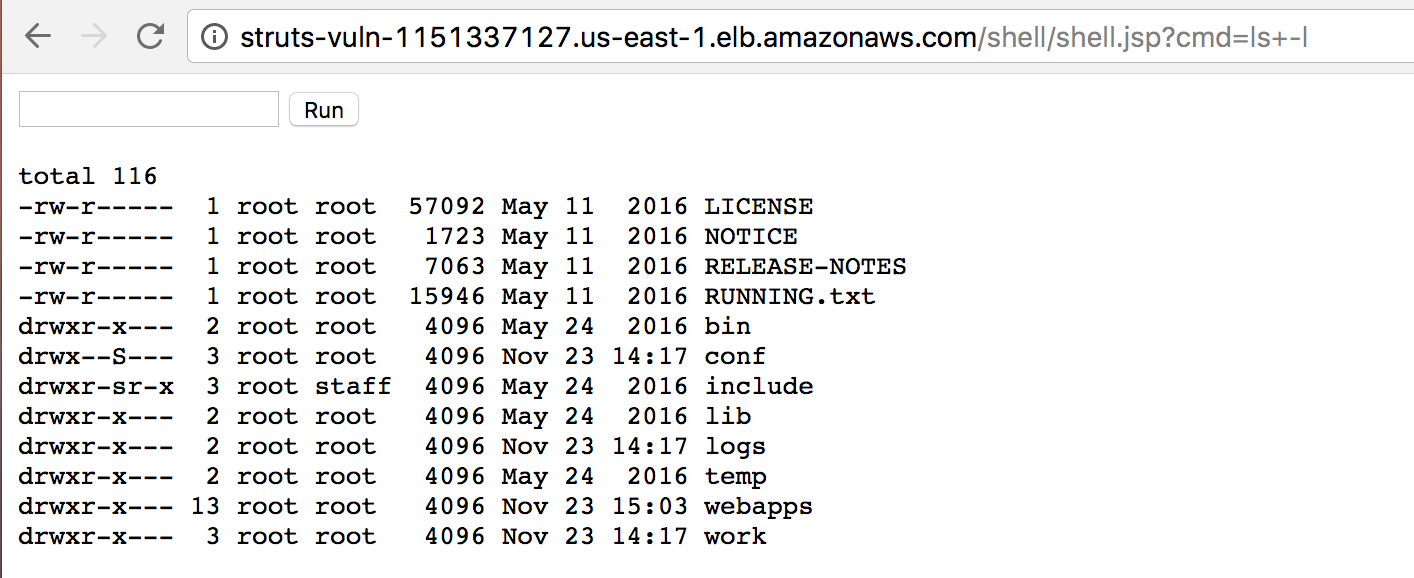

My colleague Sagie Dulcie gave me an “attacker” container (with code based on this) that attempts to upload a web shell component to a given IP address, taking advantage of that vulnerability.

    $ docker run -it --rm attacker \
        http://struts-vuln-1151337127.us-east-1.elb.amazonaws.com/struts2-rest-showcase/orders/3
    [*] URL: http://struts-vuln-1151337127.us-east-1.elb.amazonaws.com/struts2-rest-showcase/orders/3
    [*] CMD: echo test > /tmp/struts-pwn
    [$] Request sent.
    [.] If the host is vulnerable, the command will be executed in the background.
    [%] Done.

As easily as that, this creates a web shell on the server which anyone can access remotely.

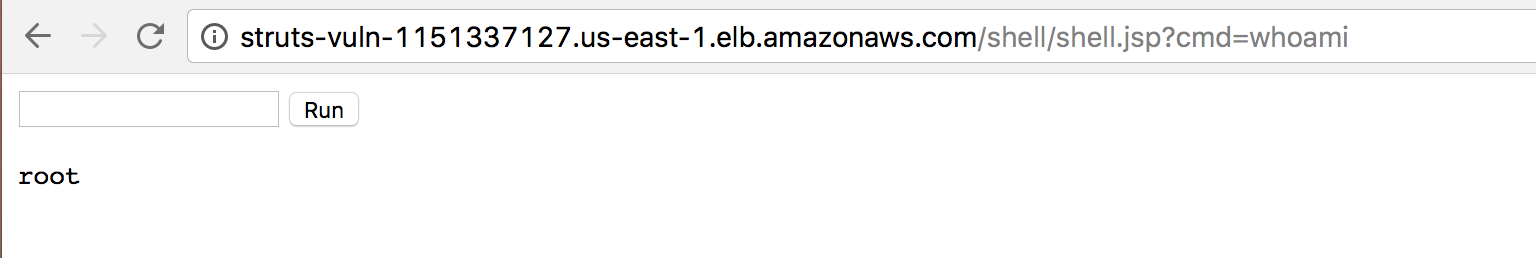

If we run whoami in this shell we can see that we have obtained root access to the server!

If you’re running an Apache Struts framework with the REST API plugin, stop reading this right now and make sure your server is patched against this exploit!

Protecting Code, Wherever it Runs

Today, the typical Aqua customer runs code on a cluster of (virtual) machines that they manage – whether in their own data center, a public cloud like AWS, Azure or Google, or a hybrid. Until now, customers have deployed an Aqua Enforcer container on every node in their cluster. But if you’re using new “serverless” technologies like AWS Fargate or Azure Container Instances, you don’t know which nodes your code will run on, so how could you deploy the Enforcer?

To resolve this problem, here at Aqua we have been building a new type of enforcer that works in Container as a Service environments. Think of it as wrapping the original image in a protective bubble.

I have built and pushed a protected version of the same vulnerable Struts code, and I register a task definition and deploy this just as before.

Once this container instance is provisioned in AWS, browsing there shows the same Tomcat test page as before. I run the same attacker code on this container instance, which will attempt to install the web shell exactly as before.

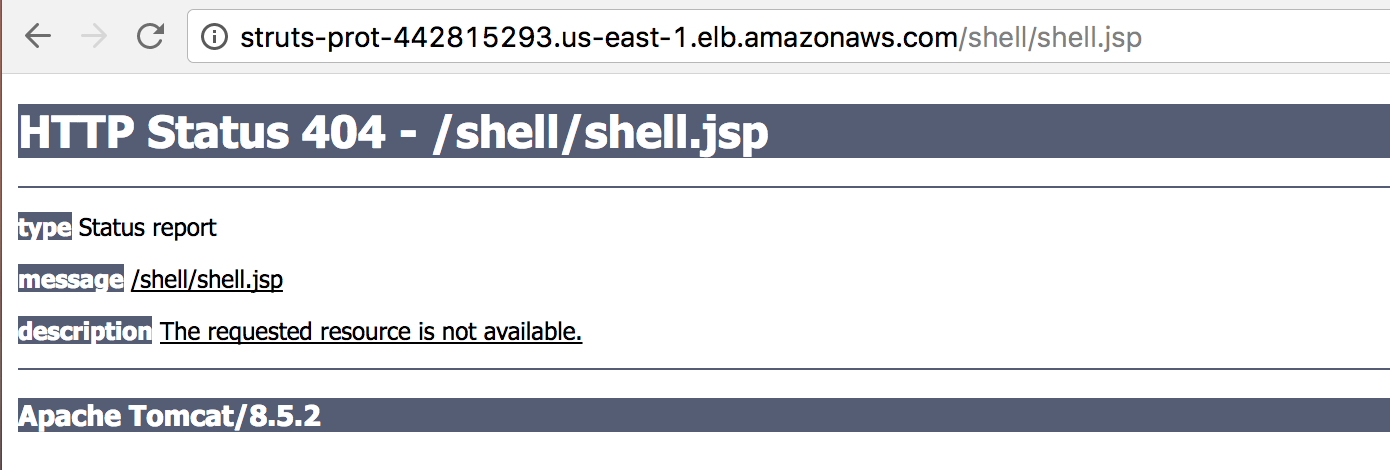

But this time browsing to the /shell/shell.jsp address results in a 404 page, indicating that the web shell wasn’t installed.

Looking at the Aqua Server we can see logs indicating that the attack was caught and stopped by the Aqua code running in the container, and that this was reported to the Server as “Block” events.

Aqua ensures that the containerized code can only do what it is expected to do. In this case, we used a profile of normal Tomcat / Struts deployment behavior, and it has caught the attacker trying to execute calls that are outside this profile. In this way, even if the attack targets a zero-day (i.e. currently unreported) vulnerability, there is a decent chance of preventing it. If only Equifax had this level of protection, perhaps all that personal information would still be private.
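To illustrate the general idea of profile-based blocking, here is a deliberately tiny sketch. It is not how Aqua’s enforcer is implemented: the function name `check_exec`, the executable paths in the profile and the attempted paths are all hypothetical, chosen only to show an allowlist decision.

```python
def check_exec(profile, executable):
    """Return "Allow" if the executable is in the expected profile, else "Block"."""
    return "Allow" if executable in profile else "Block"


# Hypothetical profile of what a Tomcat / Struts container is expected to run.
profile = frozenset({"/usr/bin/java", "/usr/local/tomcat/bin/catalina.sh"})

# Hypothetical attempts: normal startup, then a shell spawned by an exploit.
attempts = ["/usr/bin/java", "/bin/sh"]

events = [(path, check_exec(profile, path)) for path in attempts]
print(events)
# prints [('/usr/bin/java', 'Allow'), ('/bin/sh', 'Block')]
```

The decision doesn’t depend on knowing the vulnerability in advance, only on knowing what normal behavior looks like.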

Why not try out AWS Fargate and enjoy running containers on AWS without managing EC2 instances?