A few months ago we launched the Aqua MicroEnforcer, the first solution for providing runtime protection to a container running in Containers-as-a-Service platforms like AWS Fargate or Azure Container Instances. The mechanism I wrote about at the time involved building a protected version of the container image being deployed, by adding the MicroEnforcer executable into it at build time. More recently, my colleague and Aqua’s CTO Amir Jerbi wrote about the various models that are possible for securing Fargate, where he demonstrated how the image-injected code model works.

In this post I’ll show how we can achieve similar results by running MicroEnforcer as a sidecar container, so that the image to be protected doesn’t need to be modified. The key to this approach is a lesser-known Docker feature, exposed by AWS as “VolumesFrom”, that allows one container to mount volumes from another.

Sidecar Containers

The AWS Compute Blog has an example of a sidecar container, and includes a nice definition:

“Sidecar containers are a common software pattern that has been embraced by engineering organizations. It’s a way to keep server side architecture easier to understand by building with smaller, modular containers that each serve a simple purpose. Just like an application can be powered by multiple microservices, each microservice can also be powered by multiple containers that work together. A sidecar container is simply a way to move part of the core responsibility of a service out into a containerized module that is deployed alongside a core application container.”

However, the reverse proxy approach demonstrated in that post isn’t quite what we need for our MicroEnforcer. In their use case, the sidecar container intercepts traffic destined for the main application container. For runtime enforcement, we need to intercept the execution of the application itself.

Here’s the plan:

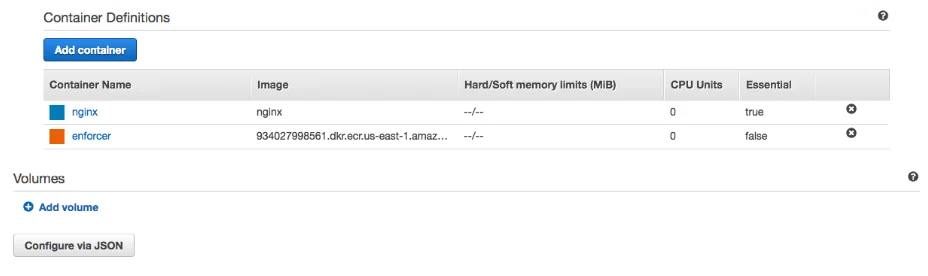

- We’ll create a Task Definition that includes two containers: a sidecar “enforcer” and the main application. For my example I used nginx.

- The sidecar container exposes a volume, which contains the MicroEnforcer binary executable.

- The Task Definition for the application container mounts that volume from the sidecar.

- It also overrides the command for the application container, calling the MicroEnforcer binary and passing in the application command as parameters, so that MicroEnforcer starts the application.

Sidecar Enforcer

Here’s the Dockerfile for my “enforcer” image.

    FROM alpine
    ADD microenforcer /aqua/microenforcer
    VOLUME ["/aqua"]

When this container is instantiated it’s actually going to run /bin/sh, as that’s the command defined in the alpine image. This is very short-lived, but it’s sufficient.

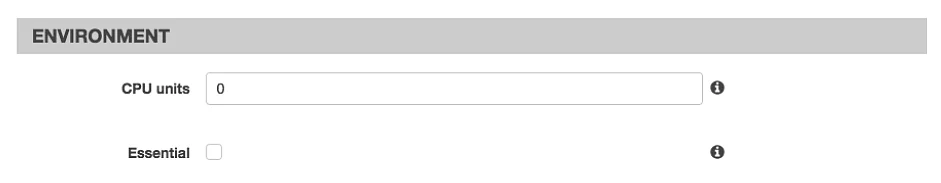

However, because this enforcer container is only going to run briefly, we need to mark it as not being an essential container in the Task Definition. This means that the task will carry on running after this container exits.

The Essential tick-box needs to be unchecked in the UI (in the JSON definition, this is "essential": false).
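As a rough sketch of what that setting looks like in the JSON, the Python snippet below builds a container definition for the sidecar and prints it. The image reference is a hypothetical placeholder (the post doesn't say where the enforcer image is stored), and `enforcer_container` is just an illustrative helper name; only the container name and the non-essential flag come from the text.

```python
import json

# Hypothetical image reference: substitute wherever you pushed the enforcer image.
ENFORCER_IMAGE = "registry.example.com/enforcer:latest"


def enforcer_container(image: str = ENFORCER_IMAGE) -> dict:
    """Build the container definition for the short-lived sidecar."""
    return {
        "name": "enforcer",   # the name the application container will refer to
        "image": image,
        # The sidecar exits almost immediately; marking it non-essential
        # keeps the task running after it stops.
        "essential": False,   # serialized as "essential": false
    }


if __name__ == "__main__":
    print(json.dumps(enforcer_container(), indent=2))
```

Every other field is omitted here, matching the "left to its default values" approach described in the post.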

Everything else for this container in the Task Definition is left to its default values.

Application container

The main application also needs to be added to the Task Definition – or if you are already running the app under Fargate you can start from the existing definition. There are two things that need modifying:

VolumesFrom

Now we get to the slightly hidden VolumesFrom feature. I say that this is “slightly hidden” because, at least at the time of writing, it’s not exposed through the AWS Console UI, but you can set it up by configuring the Task Definition JSON. (The same option exists on the Docker command line as --volumes-from.)

The Configure via JSON button appears at the very end of the Task Definition config screen.

There will be two entries in the “containerDefinitions” field and we need to modify the nginx application one rather than the enforcer.

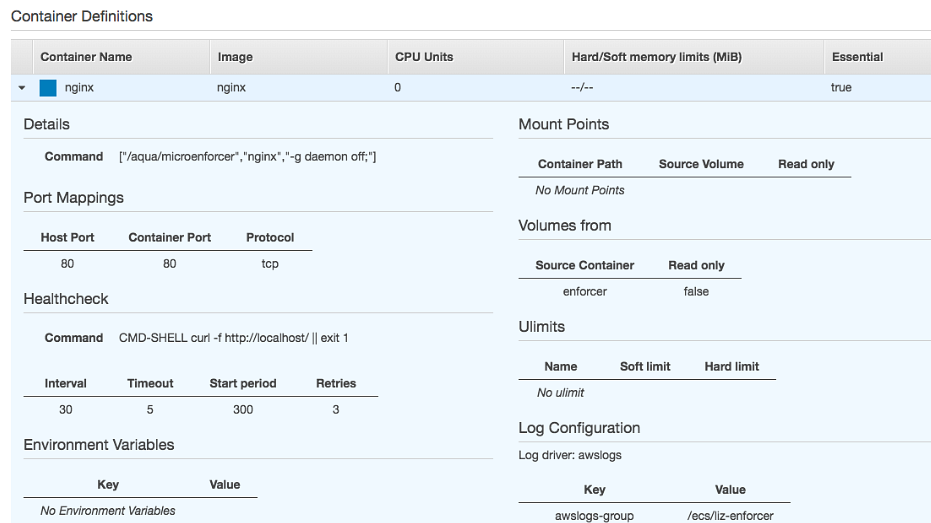

"containerDefinitions": [ {... "volumesFrom": [ { "sourceContainer": "enforcer", "readOnly": true } ],... "name": "nginx" }, {... "name": "enforcer" } ],This has the effect of mounting any volumes exposed by the enforcer container so that they are available in the nginx container. From nginx’s point of view this looks like a directory with the same path that it had in the enforcer.

Call the Enforcer

The contents of /aqua, namely the microenforcer binary, are now available from within the application container. All that remains is to call it when the task starts, by setting the Command field for nginx to ["/aqua/microenforcer", "nginx", "-g daemon off;"]. (Truth be told, it took me a few attempts to figure out how the parameterization deals with quotes!)
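To make the quoting concrete, here is a small Python sketch that applies both changes to an application container definition and prints the resulting JSON. The helper name `protect` and the `nginx:latest` image reference are assumptions for illustration; they are not part of ECS or of Aqua's tooling.

```python
import json

ENFORCER_PATH = "/aqua/microenforcer"  # where the sidecar's volume exposes the binary


def protect(container: dict, app_command: list, sidecar: str = "enforcer") -> dict:
    """Return a copy of a container definition that mounts the sidecar's
    volumes and starts the application through MicroEnforcer.

    `protect` is a hypothetical helper, written only for this example.
    """
    protected = dict(container)
    protected["volumesFrom"] = [{"sourceContainer": sidecar, "readOnly": True}]
    # Each list element is passed as a separate argument, so the original
    # application command is simply appended after the enforcer binary.
    protected["command"] = [ENFORCER_PATH, *app_command]
    return protected


# "nginx:latest" is an assumed image reference.
nginx = protect(
    {"name": "nginx", "image": "nginx:latest", "essential": True},
    ["nginx", "-g daemon off;"],
)

if __name__ == "__main__":
    print(json.dumps(nginx, indent=2))
```

Building the list in code and letting `json.dumps` handle the serialization avoids hand-escaping quotes in the Command field.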

Steady State

In many cases where a sidecar container is used, the sidecar needs to exist for the lifetime of the task – this would be the case for the reverse proxy described in the AWS blog I referenced earlier. But for our purposes the enforcer is actually running within the application container. The sidecar container can exit almost immediately, but that doesn’t bring the task down as it’s a non-essential container.

This leaves our application running, based on an image that didn’t need to be modified in any way, but under the protection of Aqua’s MicroEnforcer. In fact, in steady state this is to all intents and purposes the same as using the built-in approach: the enforcer binary runs in the application container in both cases. The only difference is that here it was loaded into memory from a mounted volume.

Two Approaches – Which to Use?

We’ve seen that we can protect containers under Fargate either by building the MicroEnforcer into the image to be protected, or by running it as a sidecar container and modifying the task definition. Which approach is better suited to which use case in a CaaS environment?

Built-in Approach

It’s simple to use the built-in approach, creating a protected version of an image consistently as part of the CI/CD pipeline. This makes it easy to ensure that every container image is protected wherever it runs (including dev/test environments), and it can be used in any CaaS environment.

Sidecar Approach

With the sidecar approach you can protect an image without changing it. This means you can use precisely the same image under an orchestrator, where the enforcer runs directly on the host, or in Fargate. This could be useful if you are “bursting” to Fargate during busy periods. However, the sidecar approach does need the VolumesFrom feature, which limits its use to Fargate (as far as I can tell, it’s not supported in ACI).

Any cost differences?

Short answer: no! AWS Fargate charges are based on the vCPU and memory that a Task requests, billed per second with a one-minute minimum. You specify the CPU and memory per task, but you don’t need to reserve resources for the individual containers. This means that with both approaches the costs should be the same. It’s the same enforcer binary that’s running in both cases, and it’s even running in the same container.
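For illustration, a task-level sizing might look like the Python sketch below. The family name, image references, and the cpu and memory values are all assumptions picked for the example; the point is only that resources are declared once for the task, and adding the sidecar does not add a per-container reservation.

```python
import json

task_definition = {
    "family": "nginx-protected",             # hypothetical family name
    "requiresCompatibilities": ["FARGATE"],
    "networkMode": "awsvpc",                 # the network mode Fargate tasks use
    "cpu": "256",                            # assumed task-level size (0.25 vCPU)
    "memory": "512",                         # assumed task-level size (512 MiB)
    "containerDefinitions": [
        # Image references below are placeholders, not taken from the post.
        {"name": "nginx", "image": "nginx:latest", "essential": True},
        {
            "name": "enforcer",
            "image": "registry.example.com/enforcer:latest",
            "essential": False,
        },
    ],
}


def has_container_reservations(task: dict) -> bool:
    """True if any container declares its own cpu or memory settings."""
    keys = ("cpu", "memory", "memoryReservation")
    return any(k in c for c in task["containerDefinitions"] for k in keys)


if __name__ == "__main__":
    print(json.dumps(task_definition, indent=2))
    print("per-container reservations:", has_container_reservations(task_definition))
```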