A few months ago I was lucky enough to get my hands on Fargate when it was in preview in the run-up to AWS re:Invent. It was immediately clear that it’s a pretty cool concept, and that it presents a new challenge for security solutions like Aqua, because there is no “host” entity on which you can deploy your side-car container.

But before we get into that – having spent some more time with it I wanted to share some additional details that might help if you’re setting up your own tasks and services in Fargate.

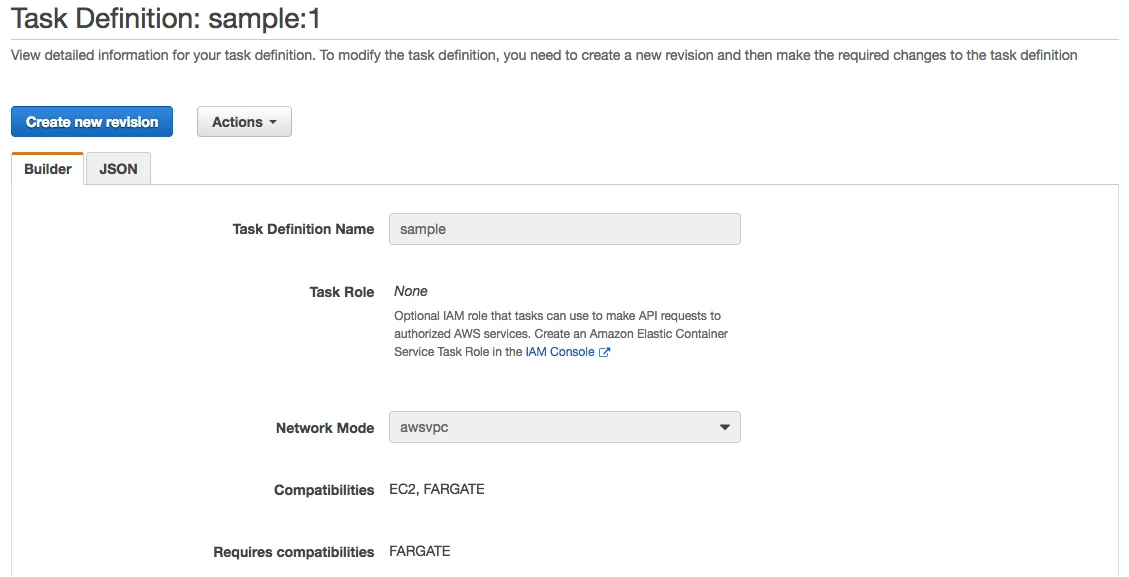

Task Definition

A Task Definition describes the containerized workloads you want to run. Most of this is self-explanatory, but you’ll want to be aware of Networking mode and logging configuration.

Networking mode

If you’re going to run a containerized task under Fargate, the Task Definition must use awsvpc networking mode as shown in the following diagram:

We’ll come back to networking later in this post.

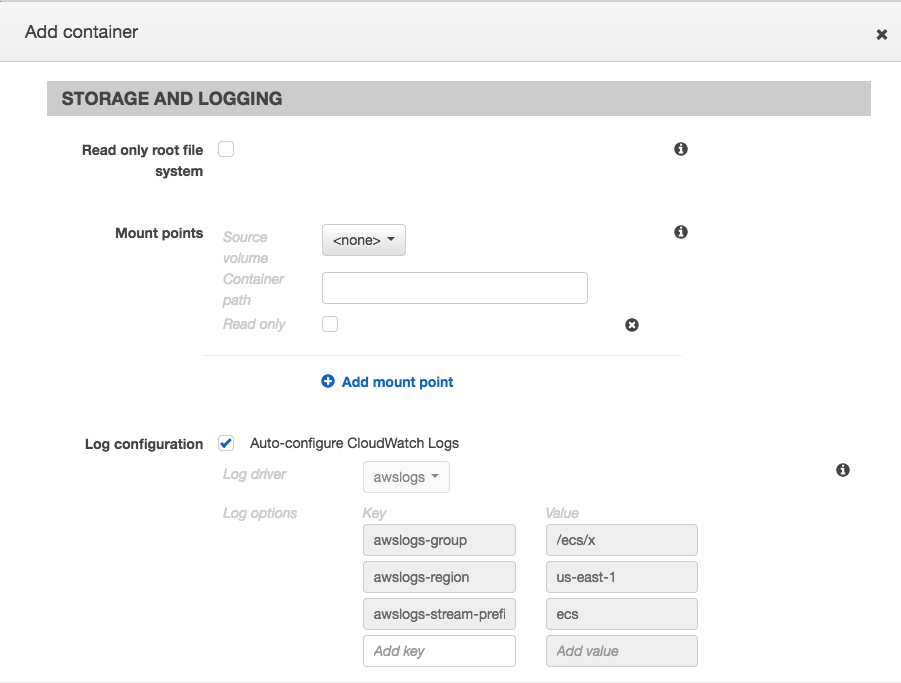

CloudWatch logging

If you’re used to Docker or Kubernetes, you don’t really think about logging at the point where you’re configuring the equivalent of tasks, because the tools give you access to logs by default through kubectl logs or docker container logs. But in ECS, you’ll want to set up CloudWatch logging while you’re configuring the Task Definition, as you won’t be able to add it later. Getting access to your running container to debug it is also going to be tricky, as there is no equivalent of docker exec or kubectl exec, so your log output is the best chance you have of debugging your containers. Fortunately it’s super-easy to set up: look under Storage and Logging when you’re adding a container to a task definition.
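To make the two settings above concrete, here is a minimal sketch of a Fargate-compatible task definition, written as the Python dictionary you might pass to the ECS RegisterTaskDefinition API. The family name, image, port, log group and region are hypothetical placeholders, not values from this post; the field names follow the ECS task definition format.

```python
import json


def fargate_task_definition(family, image, log_group, region):
    """Build a task definition dict suitable for Fargate.

    All argument values used below are placeholders for illustration.
    """
    return {
        "family": family,
        # Fargate tasks must use awsvpc networking mode.
        "networkMode": "awsvpc",
        "requiresCompatibilities": ["FARGATE"],
        # Fargate needs task-level CPU and memory (strings in the API).
        "cpu": "256",
        "memory": "512",
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "portMappings": [{"containerPort": 8080, "protocol": "tcp"}],
                # Send the container's stdout/stderr to CloudWatch Logs.
                "logConfiguration": {
                    "logDriver": "awslogs",
                    "options": {
                        "awslogs-group": log_group,
                        "awslogs-region": region,
                        "awslogs-stream-prefix": family,
                    },
                },
            }
        ],
    }


task_def = fargate_task_definition(
    "demo-web", "example/demo-web:1.0", "/ecs/demo-web", "us-east-1"
)
print(json.dumps(task_def, indent=2))
```

In practice the task also needs an execution role that is allowed to write to CloudWatch Logs; that is left out here to keep the sketch short.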

Networking

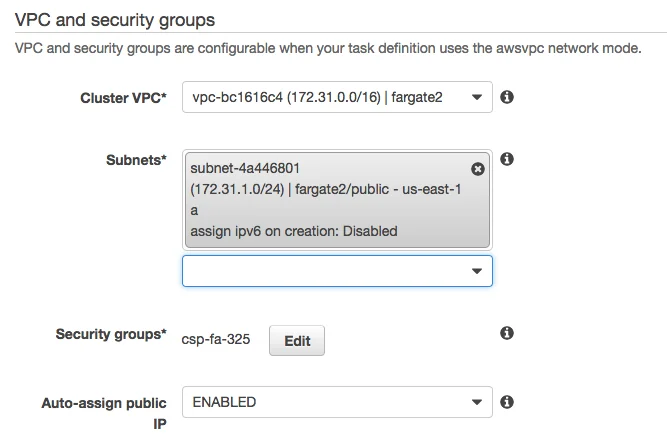

As mentioned above, Fargate tasks have to run in awsvpc networking mode, which means they have to be associated with a VPC. More specifically, they are associated with at least one subnet within a VPC. You do have a couple of options.

Fargate task with public IP address

The simplest set-up is to have a VPC with a public subnet, and then request an auto-assigned public IP address when you create the task. Here’s the relevant section of the Task creation UI:

You can associate your task with one or more subnets. Beware – it is perfectly possible to pick a private subnet and also enable the auto-assigned public IP address. As far as I can tell, this IP address will be utterly useless to you.
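If you start tasks through the API rather than the console, the same choices appear in the network configuration you pass when running a task. The sketch below builds that structure; the subnet and security group IDs are made-up placeholders, and the helper name is my own.

```python
def awsvpc_network_configuration(subnets, security_groups, public_ip):
    """Build the networkConfiguration block for an awsvpc-mode task."""
    if not subnets:
        raise ValueError("at least one subnet is required")
    return {
        "awsvpcConfiguration": {
            "subnets": list(subnets),
            "securityGroups": list(security_groups),
            # Only useful when the subnets are public (see the warning above).
            "assignPublicIp": "ENABLED" if public_ip else "DISABLED",
        }
    }


# Hypothetical IDs for a task in a single public subnet.
network = awsvpc_network_configuration(
    ["subnet-0aaa1111"], ["sg-0bbb2222"], public_ip=True
)
print(network)
```

Nothing in this structure stops you from combining a private subnet with an enabled public IP, which is why it is worth checking which kind of subnet you picked.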

The downside of the public IP address is that you’ll get a new one whenever you start a new task. For anything other than the simplest of set-ups you’ll probably find it more convenient to put your Fargate tasks behind a load balancer, so that there is a consistent URL for accessing them from the internet. Fortunately, if you use a Fargate Service to create your tasks, it will automatically register the tasks’ IP addresses as targets for the load balancer.

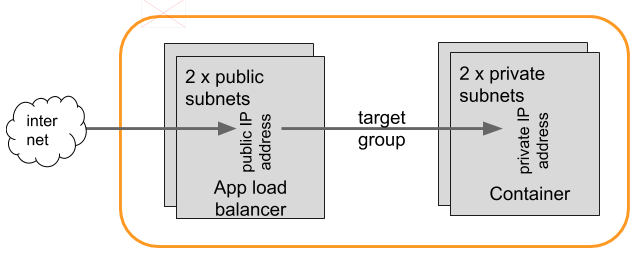

Fargate tasks behind a load balancer

Here’s an example set-up of Fargate tasks running in a private subnet, accessible through an internet-facing Application Load Balancer. This example CloudFormation template sets up the required VPC, subnets and routing tables.

You’ll want different subnets in each pair to be in different availability zones, for high availability. Incidentally it doesn’t seem to be possible to set up an ALB with fewer than two subnets. This may seem unnecessary if you’re not interested in high availability and you just want the functionality of automatically registering task IP addresses, but it makes sense if you consider the original purpose of load balancing.

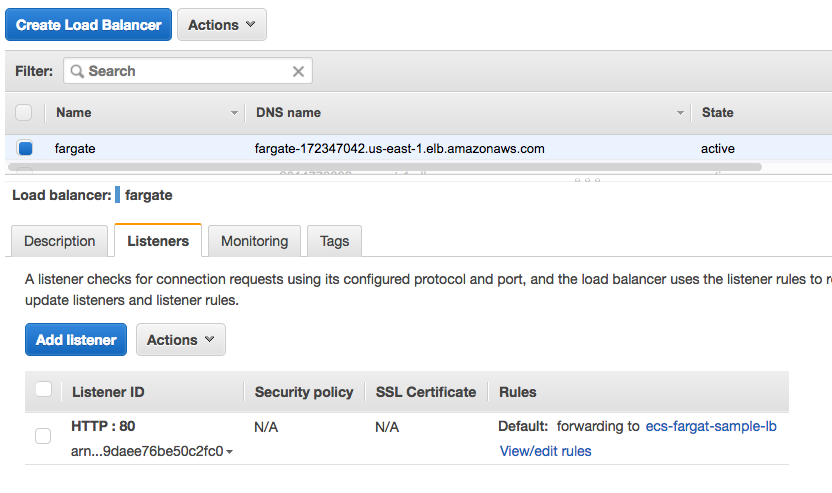

A load balancer can take traffic that arrives on a specific port, and forward it to a Target Group.

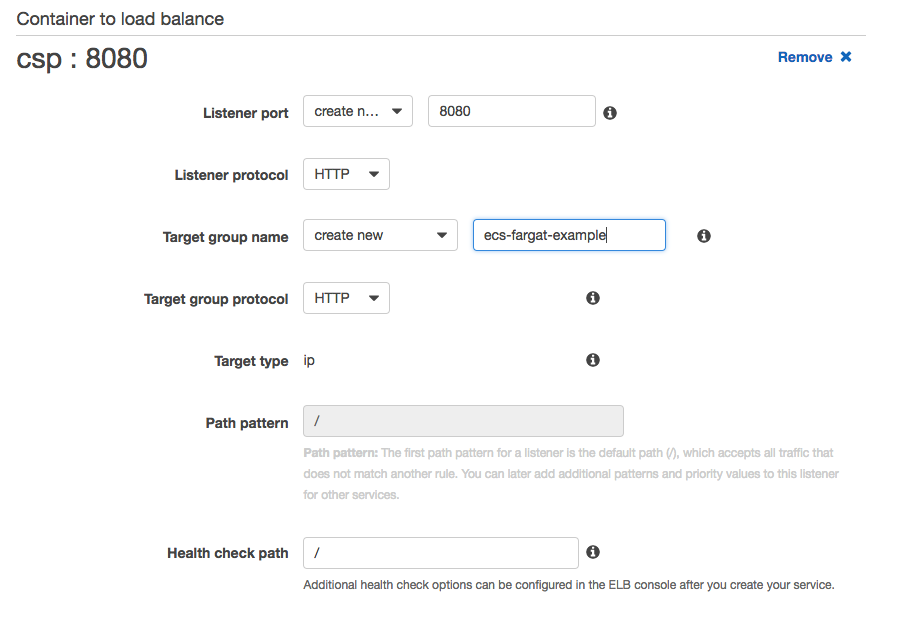

For Fargate, the Target Group consists of the (private) IP addresses of a set of identical Tasks. If you create a Service, you have the option to automatically add a listener to an Application Load Balancer. As Tasks are created to fulfil the requirements of the Service, their IP addresses will automatically be registered to the specified Target Group.
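As a rough illustration, this is the shape of the request you would send to the ECS CreateService API to get that automatic registration. Every name, ID and ARN here is a hypothetical placeholder; the sketch also assumes the Target Group was created with the ip target type, which awsvpc-mode tasks require.

```python
def fargate_service_request(cluster, name, task_definition, target_group_arn,
                            container_name, container_port, network, count=2):
    """Build the parameters for creating a load-balanced Fargate service."""
    return {
        "cluster": cluster,
        "serviceName": name,
        "taskDefinition": task_definition,
        "desiredCount": count,
        "launchType": "FARGATE",
        # ECS registers each task's private IP in this Target Group.
        "loadBalancers": [
            {
                "targetGroupArn": target_group_arn,
                "containerName": container_name,
                "containerPort": container_port,
            }
        ],
        "networkConfiguration": network,
    }


# Placeholder values: private subnets in two availability zones, no public IP.
request = fargate_service_request(
    cluster="demo-cluster",
    name="demo-web",
    task_definition="demo-web:1",
    target_group_arn=(
        "arn:aws:elasticloadbalancing:us-east-1:123456789012:"
        "targetgroup/demo-web/0123456789abcdef"
    ),
    container_name="demo-web",
    container_port=8080,
    network={
        "awsvpcConfiguration": {
            "subnets": ["subnet-0aaa1111", "subnet-0ccc3333"],
            "securityGroups": ["sg-0bbb2222"],
            "assignPublicIp": "DISABLED",
        }
    },
)
print(request["loadBalancers"])
```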

You’ll need to create an internet-facing Application Load Balancer first. One thing to be aware of is that the wizard expects you to route to different services based on a URL pattern; if you want to route based on the port on which traffic arrives, this seems harder to set up. If you try to modify the target groups and listeners manually, it seems likely to break the auto-registration of IP addresses. An easy workaround is to create a separate ALB for the traffic arriving on a different port.

Security groups

Be aware that you will need security groups that permit traffic to flow in the network. They come up in a few different places in the overall configuration:

- On the ALB there needs to be a security group permitting the traffic that’s expected to arrive there from the internet.

- When you create a Task or Service you’ll need another security group that allows traffic from the ALB to the task / service. Bear in mind that this might not be on the same port that it arrived at the ALB!
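The two rules above can be sketched as the ingress permissions you would attach to each security group. The ports and the security group ID are assumptions for illustration; the dictionaries follow the shape of EC2 ingress permission entries.

```python
def alb_ingress(listener_port):
    """Allow traffic from anywhere on the internet to the ALB listener."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": listener_port,
            "ToPort": listener_port,
            "IpRanges": [{"CidrIp": "0.0.0.0/0"}],
        }
    ]


def task_ingress(container_port, alb_security_group_id):
    """Allow traffic to the task only from the ALB's security group."""
    return [
        {
            "IpProtocol": "tcp",
            "FromPort": container_port,
            "ToPort": container_port,
            "UserIdGroupPairs": [{"GroupId": alb_security_group_id}],
        }
    ]


# Hypothetical set-up: the ALB listens on 80, the container on 8080.
alb_rules = alb_ingress(80)
task_rules = task_ingress(8080, "sg-0123456789abcdef0")
print(alb_rules)
print(task_rules)
```

Note how the two port numbers differ: the task's rule has to match the container port, not the listener port.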

Health checks

Fargate automatically sets up health checks to determine whether tasks are running or not (and take action as necessary). This is as simple as sending an HTTP request to a particular URL and failing if it doesn’t respond with 200 OK within a configurable time frame. You have the option to set up a longer grace period when first creating a Task, which is helpful if there is significant initialization to do before the container is ready to accept traffic.
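To show the idea rather than the platform's actual implementation, here is a simplified sketch of such a check in Python. The function names, the default timeout and the grace period values are my own assumptions; real load balancer health checks also count consecutive successes and failures before changing a target's state.

```python
import urllib.error
import urllib.request


def is_healthy(url, timeout_seconds=5.0, opener=urllib.request.urlopen):
    """Return True only if `url` answers 200 OK within the timeout."""
    try:
        with opener(url, timeout=timeout_seconds) as response:
            return response.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, or an HTTP error status.
        return False


def should_replace(task_age_seconds, grace_period_seconds, healthy):
    """Ignore failed checks while the task is inside its grace period."""
    if task_age_seconds < grace_period_seconds:
        return False
    return not healthy


# A task that is still initializing is left alone; an old, failing one is not.
print(should_replace(10, 60, healthy=False))
print(should_replace(120, 60, healthy=False))
```

The `opener` parameter exists only so the probe can be exercised without a real network connection.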

When you’re setting up a new Service, it can be a good idea to start by setting up an individual Task to make sure that it is all configured correctly, including security groups and health check parameters. If you start with the Service and something is wrong, the health check will very likely fail, so your tasks get taken down and recreated repeatedly, with the concomitant registering and deregistering of addresses in target groups. This isn’t the easiest of environments for debugging!

Scaling and Stopping Services

If you want to stop a Fargate service, it’s not quite as simple as hitting the Delete button. You’ll need to Update the service to scale the number of running tasks to 0. Once you have no active tasks, you can delete the service – though AWS will warn you to delete the corresponding Load Balancer and Target Groups.
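The same sequence can be scripted. The sketch below assumes `ecs` is an ECS client such as the one returned by `boto3.client("ecs")`; the function name, polling interval and cluster and service names are hypothetical, and the load balancer and target group clean-up is left out.

```python
import time


def stop_and_delete_service(ecs, cluster, service,
                            poll_seconds=5.0, sleep=time.sleep):
    """Scale a service to zero, wait for its tasks to stop, then delete it."""
    # Step 1: update the service so that it wants no running tasks.
    ecs.update_service(cluster=cluster, service=service, desiredCount=0)

    # Step 2: poll until ECS reports that no tasks are running.
    while True:
        described = ecs.describe_services(cluster=cluster, services=[service])
        if described["services"][0]["runningCount"] == 0:
            break
        sleep(poll_seconds)

    # Step 3: with no active tasks left, the service can be deleted.
    ecs.delete_service(cluster=cluster, service=service)
```

With a real client this would be called as `stop_and_delete_service(boto3.client("ecs"), "demo-cluster", "demo-web")`, using your own cluster and service names.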

Now Let’s Talk About Fargate Security

Because Amazon’s Fargate is a Containers-as-a-Service offering where you can run container instances without having to manage the hosts they run on, it presents a challenge for today’s container security solutions, as there’s no way to access the host to run a separate enforcing “side-car” container or process. At Aqua Security, we have a solution that we’ve named MicroEnforcer™ to handle this type of deployment.

With MicroEnforcer, we embed the enforcement into the container image, making the container self-policing. Let’s see how Aqua’s MicroEnforcer can be added to a Dockerfile to produce a container image that can enforce its own runtime policies.

For this demonstration I’m going to use a web application that exposes a shell through the browser. Generally speaking, this is not something you want to do! But I’m going to limit the exposure by using MicroEnforcer to restrict the set of executables that can be run inside the container.

Add Aqua MicroEnforcer to the Dockerfile

Here’s a Dockerfile for running this application:

    FROM golang:latest
    RUN go get github.com/yudai/gotty
    ENTRYPOINT ["gotty", "-w", "/bin/bash"]

To protect this container, we modify the Dockerfile to add the MicroEnforcer executable:

    FROM golang:latest
    RUN go get github.com/yudai/gotty
    ADD microenforcer /microenforcer
    ADD gotty/policy.json /etc/aquasec/policy/policy.json
    ENTRYPOINT ["/microenforcer", "gotty", "-w", "/bin/bash"]

In addition to the binary, we add a JSON file that describes the policy we want to enforce. You can export a suitable policy file from the Aqua UI; the important line for the purposes of this demo is the very small set of executables that we will allow to be run in this container:

"allow_executables":["/go/bin/gotty","/bin/bash","/bin/ls","/bin/cat","/usr/bin/which"],

Note that we change the entrypoint for the container to call the microenforcer binary, which then calls our original gotty executable. The MicroEnforcer will use the policy definition to limit what is permitted inside the container instance.
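To illustrate what an allow-list of executables means in practice, here is a purely conceptual sketch in Python. It is not how MicroEnforcer is implemented, and a real policy file exported from the Aqua UI contains more than this one key; wrapping the fragment in braces and the `is_allowed` helper are my own assumptions.

```python
import json

# Only the allow_executables line comes from the policy shown above.
POLICY_JSON = """
{
  "allow_executables": [
    "/go/bin/gotty", "/bin/bash", "/bin/ls", "/bin/cat", "/usr/bin/which"
  ]
}
"""


def is_allowed(executable_path, policy):
    """Return True if the executable is on the policy's allow-list."""
    return executable_path in policy.get("allow_executables", [])


policy = json.loads(POLICY_JSON)
for path in ["/bin/ls", "/usr/bin/whoami"]:
    verdict = "allowed" if is_allowed(path, policy) else "blocked"
    print(path, verdict)
```

Anything that is not explicitly listed is refused, so the policy stays short and easy to audit.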

Run the container

In AWS we create a Task Definition that uses the image built from the protected version of the Dockerfile. It also has the IP address of the Aqua server, and the token we just obtained, passed in as environment variables, so that the MicroEnforcer can connect to the server.

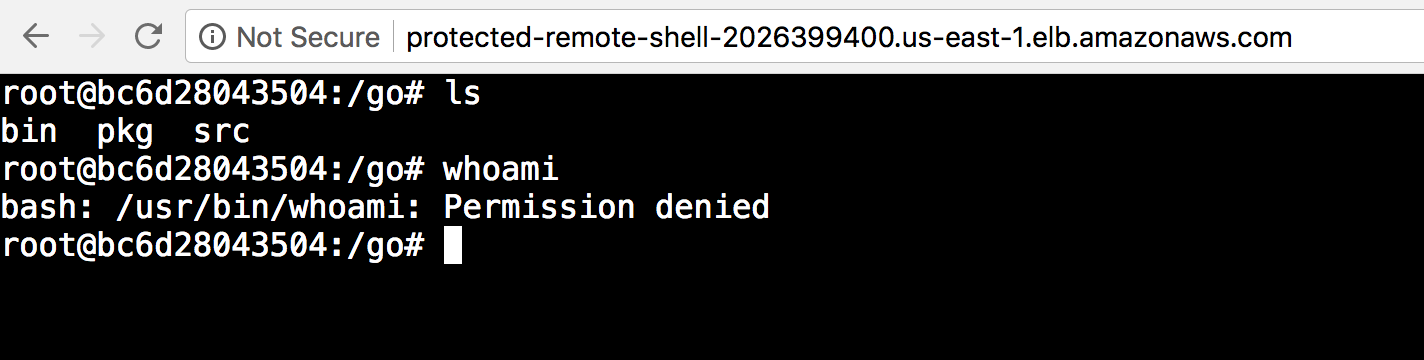

Run commands in the shell

My web application is running in AWS as a Fargate service, accessible through a Load Balancer set up as described earlier.

Visiting this address, we can see shell access! And we can run commands in it! But the good news is that we can only run commands that are permitted by the policy file we added to the Dockerfile.

The ls command was permitted, but whoami was blocked because it’s not in the set of permitted executables. For the short period of time while I’m running this demo, anyone could visit this site and run shell commands on this container, but they’ll see a Permission Denied error for anything outside the very limited set that is allowed by the policy for this particular container.

The Runtime Security Solution for AWS Fargate and Azure Container Instances

I’ve shown here how Aqua can protect your running containers in a Containers-as-a-Service environment. In this case I used AWS Fargate, but the same approach applies to Azure Container Instances as well. In this example we limited the set of executables that the container could run, but you can use MicroEnforcer to enact the same set of policy restrictions as the regular Aqua Enforcer, including limiting network traffic, user IDs and so on. The MicroEnforcer can also be configured to communicate with the Aqua Server to report audit logs.

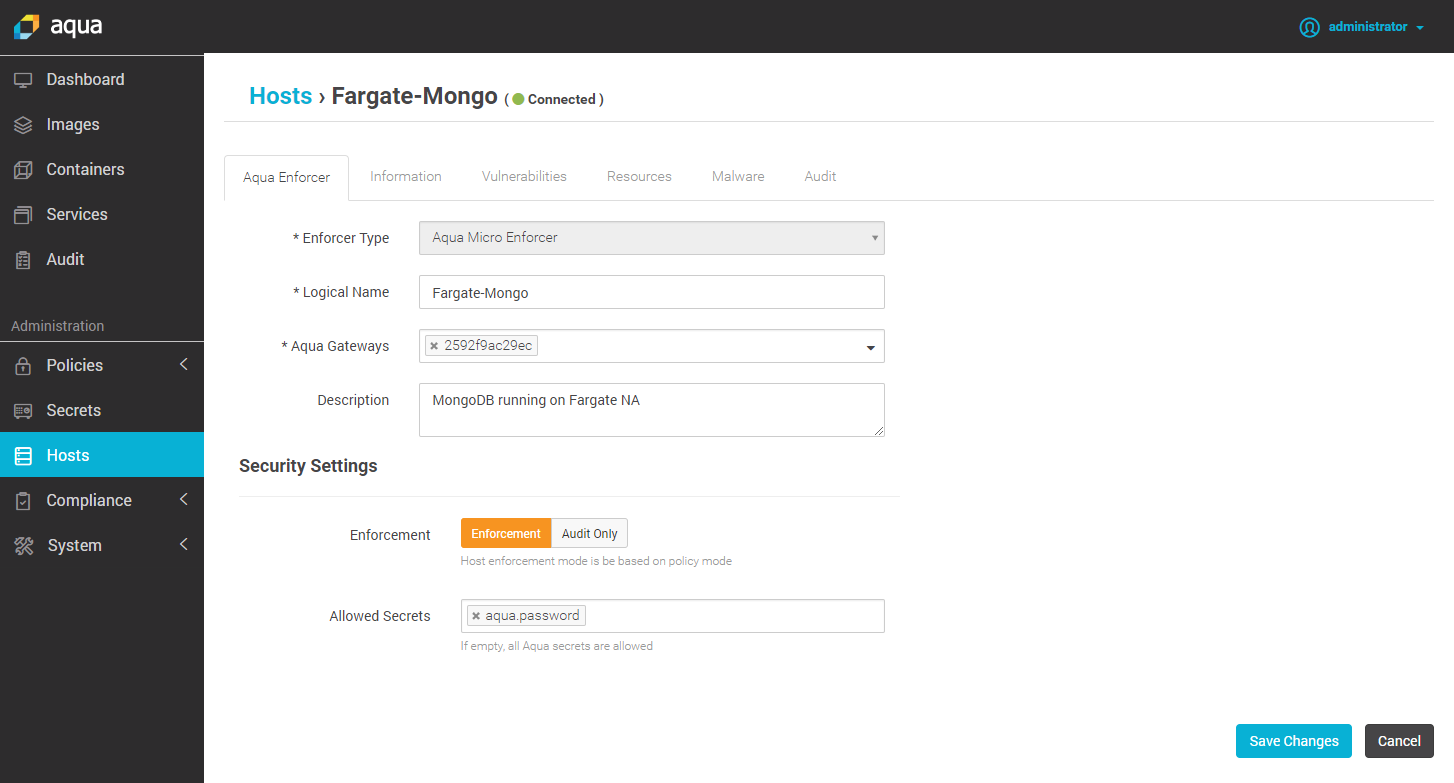

In the Aqua Command Center this is easy to set up: MicroEnforcer is basically treated as a type of “host” to which you deploy, and you choose MicroEnforcer as the enforcer type:

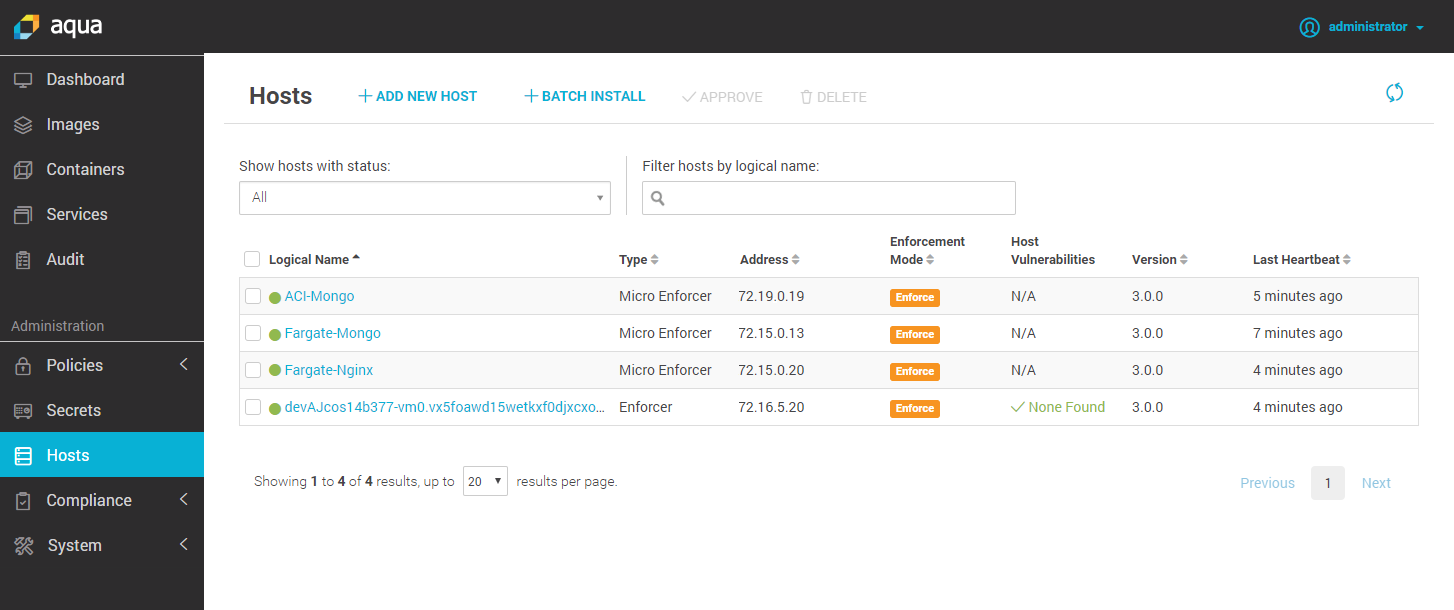

Then, when you look at the Hosts screen, you can see the MicroEnforcers side by side with the “regular” side-car container Enforcers.

MicroEnforcer is now available as part of Aqua’s Container Security Platform 3.0. Why not speak to one of our friendly sales team about your requirements for securing CaaS?