One of the fundamental questions in container security, since the early days of Docker, is whether a container constitutes a security boundary. In this first part of a two-part discussion of containers and isolation, we examine the security boundary question through key examples. Part II will continue with the nuances of container isolation techniques.

Defining a security boundary

First, we need to define “security boundary.” According to Microsoft’s Security Servicing Criteria, which contains the company’s criteria for devoting attention to vulnerabilities, “A security boundary provides a logical separation between the code and data of security domains with different levels of trust. For example, the separation between kernel mode and user mode is a classic and straightforward security boundary.” And the CISSP certification materials note: “A security boundary is the line of intersection between any two areas, subnets, or environments that have different security requirements or needs. A security boundary exists between a high-security area and a low-security one, such as between a LAN and the Internet.”

Critically, the need for third-party controls cannot be used as a criterion for defining a security boundary. Instead, the CISSP materials state, “Once you identify a security boundary, you need to deploy controls and mechanisms to control the flow of information across those boundaries.”

The verdict from the major cloud providers

A quick online search turns up what appears to be a verdict from two of the biggest cloud providers, Google and IBM/Red Hat: no, a container is not a security boundary. Here we are explicitly referring to Linux containers, which isolate at the process level (more about this in Part II). Google goes on the record in its blog, without distinguishing between Linux and Windows containers: “A container isn’t a strong security boundary. They provide some restrictions on access to shared resources on a host, but they don’t necessarily prevent a malicious attacker from circumventing these restrictions.”

Dan Walsh of Red Hat has for some time made a point of saying, “Containers do not contain.” In his own words, “Stop assuming that Docker and the Linux kernel protect you from malware.”

The verdict from independent practitioners and container experts

Practitioners and other independent container experts offer more nuanced, in-depth demonstrations of isolation in container environments that rely on the properties of containers themselves. They show how to enforce container isolation by layering container defaults, warn about the consequences of mistakes, and demonstrate how complex the required layers and controls are to get right.

For example, from a practitioner’s perspective, Netflix reports success in employing user namespaces, a native container capability, as an additional element of its security boundary, to the point that it was not affected by CVEs that would have granted containers unintended privileges. Even so, Netflix acknowledges the risks of this approach: “Despite the risks, we’ve chosen to leverage containers as part of our security boundary.”

And one of the early container security pioneers, Jessie Frazelle, touts the strength of container security defaults versus container sandboxing techniques (which will be introduced in Part II).

The devil is in the details

A short analysis of the fundamental security properties available natively in containers, their intent, and how they can be bypassed sheds more light on the subject.

Aligning security layers

Frazelle states, “In Docker, we worked really hard to create secure defaults for the container isolation itself. I then tried to bring all those up the stack into orchestrators. Container runtimes have security layers defined by seccomp, AppArmor, kernel namespaces, cgroups, capabilities, and an unprivileged Linux user. All the layers don’t perfectly overlap, but a few do.”
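To make a few of these layers concrete, the following is a minimal Python sketch that reads the seccomp mode, effective capability mask, `no_new_privs` flag, and effective user ID that Linux exposes in `/proc/<pid>/status`. The function names and the shape of the report are illustrative assumptions, not part of any container tool, and the sketch does not cover AppArmor, namespaces, or cgroups.

```python
from pathlib import Path

# Seccomp modes as reported in the "Seccomp:" field of /proc/<pid>/status.
SECCOMP_MODES = {0: "disabled", 1: "strict", 2: "filter"}


def parse_status(text: str) -> dict:
    """Extract a few isolation-related fields from /proc/<pid>/status text."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()

    report = {}
    if "Seccomp" in fields:
        mode = int(fields["Seccomp"])
        report["seccomp"] = SECCOMP_MODES.get(mode, f"unknown ({mode})")
    if "CapEff" in fields:
        # CapEff is a hexadecimal bitmask of the effective capabilities.
        report["effective_caps"] = int(fields["CapEff"], 16)
    if "NoNewPrivs" in fields:
        report["no_new_privs"] = fields["NoNewPrivs"] == "1"
    if "Uid" in fields:
        # The Uid line lists real, effective, saved, and filesystem UIDs.
        report["effective_uid"] = int(fields["Uid"].split()[1])
    return report


def summarize_self() -> dict:
    """Hypothetical helper: report on the current process (Linux only)."""
    return parse_status(Path("/proc/self/status").read_text())
```

Calling `summarize_self()` from inside a container and again on the host is one rough way to see which of these layers actually differ between the two.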

The seccomp filter is an example of container runtime security layers that don’t overlap well, which creates an easy way to bypass container isolation. Part of the default Docker security model is a seccomp filter that blocks specific system calls into the Linux kernel. However, when containers run in Kubernetes, this filter is disabled by default, which allows specific attacks, such as Mark Manning’s keyctl-unmask, to work.
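One way to catch this gap before deployment is to check whether a pod manifest explicitly requests a seccomp profile. The sketch below, in Python, assumes the manifest has already been loaded into a dictionary (for example from YAML) and looks at the `securityContext.seccompProfile.type` field at the pod and container level. The function names are hypothetical, and the check treats a missing profile as unfiltered. That matches the default described above, but cluster configuration can change the default, which this check cannot see.

```python
def _seccomp_type(security_context):
    """Return the seccompProfile type from a securityContext, or None."""
    profile = (security_context or {}).get("seccompProfile") or {}
    return profile.get("type")


def containers_without_seccomp(pod: dict) -> list:
    """List containers that, per the manifest alone, get no seccomp filter.

    A container-level seccompProfile takes precedence over the pod-level one.
    A container counts as unfiltered when the effective type is missing or
    explicitly "Unconfined".
    """
    spec = pod.get("spec") or {}
    pod_level = _seccomp_type(spec.get("securityContext"))
    containers = (spec.get("containers") or []) + (spec.get("initContainers") or [])

    unfiltered = []
    for container in containers:
        effective = _seccomp_type(container.get("securityContext")) or pod_level
        if effective in (None, "Unconfined"):
            unfiltered.append(container.get("name", "<unnamed>"))
    return unfiltered
```

A manifest that sets the pod-level type to `RuntimeDefault` and does not override it per container would come back with an empty list.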

The gaps in overlap between the different security layers for containers are enough, in and of themselves, to conclude that containers do not constitute security boundaries.

Gaining full visibility

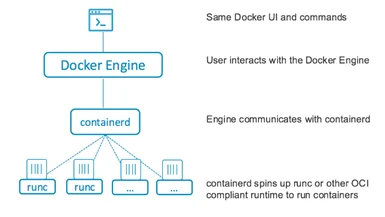

One of the additional challenges of standard Linux containers is that there are a number of programs used to create and manage them. For example, runc is used for launching containers and containerd is used for managing them, and then tools such as Docker can add developer-friendly functionality.

Many users might not be fully aware of all the programs involved, especially as container tools such as Docker aim to automate running containers and make it less complicated. For example, Docker installs containerd automatically as its runtime, so a user might not realize that the ContainerDrip vulnerability, which could leak users’ credentials if they were to run an untrusted container image, is relevant to them.
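A first step toward that awareness can be as simple as listing which container programs are present on a host. The following Python sketch looks up a short, assumed list of binary names on the `PATH`. The list and the function name are illustrative, and a missing entry is not proof that a component is absent, since a binary may be installed outside the `PATH`.

```python
import shutil

# Assumed binary names for the tools discussed here; adjust for your hosts.
COMPONENTS = ("docker", "containerd", "runc", "crio")


def find_components(names=COMPONENTS, which=shutil.which):
    """Map each binary name to its resolved path, or None if not on PATH.

    The lookup function is injectable so the logic can be exercised
    without any container tooling installed.
    """
    return {name: which(name) for name in names}
```

The resulting paths give a starting list of components whose versions can then be compared against published advisories.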

Frazelle says, “To be truly secure, you need more than one layer of security, so that when there is a vulnerability in one layer, the attacker also needs a vulnerability in another layer to bypass the isolation mechanism.” This is great advice, but enforcing any kind of security boundary first requires awareness of all the layers. Many container tools were not created with the intent of providing this awareness and visibility, and many practitioners do not know enough to understand why gaining it matters.

So perhaps the more important point to consider is, “What are the ramifications if our team is thinking of containers as a security boundary?”

Security layers that move

Sometimes vulnerabilities in the different programs can change where the security layers do and don’t overlap. For example, a recent vulnerability in the runc project used by Docker (and other runtimes like CRI-O) could allow anyone able to create pods, attackers included, to gain root access on the cluster nodes. Users may not be aware that they use runc, and even if they are, they might not be aware of this particular method of gaining privileged access.

Conclusion

Will a team that believes a container is a security boundary be motivated to achieve maximum security and container isolation using native controls? A few simple examples demonstrate that setting up a container as a true security boundary is far from trivial: it requires a great deal of expertise in the components of containers and in how the security layers do or don’t overlap. And, in the end, the security layers still do not fully overlap, which undermines any real argument that containers might somehow form a security boundary.

Various container isolation techniques have been constructed to help with these challenges and provide more stringent boundaries. Stay tuned for Part II in this series to learn more about these techniques.